Google's breakthrough in AI compression technology has significant implications for the tech industry. The company's TurboQuant algorithm can cut the memory usage of large language models (LLMs) by a factor of six while also boosting their speed. That matters because LLMs require massive amounts of memory, which makes them expensive to develop and deploy.

The TurboQuant algorithm works by compressing the key-value cache, a digital "cheat sheet" that stores important information so it doesn't have to be recomputed. By reducing the size of this cache, Google's algorithm can improve the performance of LLMs without compromising their accuracy. In some tests, TurboQuant has shown an eight-fold increase in performance and a six-fold reduction in memory usage.
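To get a feel for why the key-value cache matters, here is a rough back-of-the-envelope sketch of how its size grows. The model shape below (32 layers, 32 heads, 128 dimensions per head, 8,192 tokens, 16-bit values) is a hypothetical example, not a figure from Google, and the six-fold factor is simply the reduction reported above applied to that estimate.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, seq_len,
                   bytes_per_value, batch_size=1):
    """Estimate KV cache size: one key and one value vector per token,
    per attention head, per layer."""
    keys_and_values = 2  # the cache stores both keys and values
    return (keys_and_values * num_layers * num_kv_heads * head_dim
            * seq_len * batch_size * bytes_per_value)


# Hypothetical model shape, chosen only for illustration.
baseline = kv_cache_bytes(num_layers=32, num_kv_heads=32, head_dim=128,
                          seq_len=8192, bytes_per_value=2)

# Apply the six-fold reduction reported in the article.
compressed = baseline / 6

GIB = 1024 ** 3
print(f"baseline cache:  {baseline / GIB:.2f} GiB")
print(f"after 6x saving: {compressed / GIB:.2f} GiB")
```

Because the cache grows in step with the number of tokens in the conversation, the saving gets larger in absolute terms as prompts get longer.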

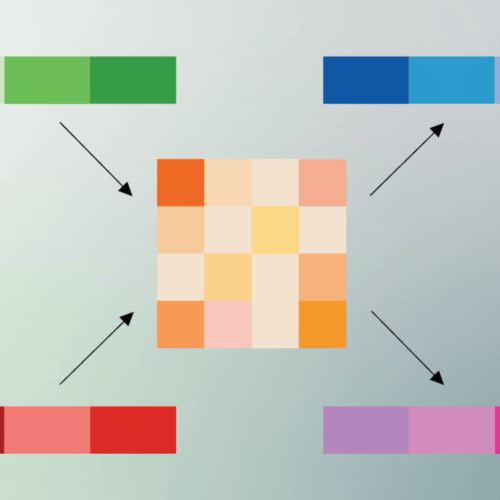

Developers use quantization techniques to make models smaller and more efficient, but these methods often come with a trade-off in quality. TurboQuant, however, achieves high-quality compression without sacrificing accuracy. The algorithm works in two steps, one of which, the PolarQuant system, converts vectors into polar coordinates to reduce their size.
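The quality trade-off in ordinary quantization can be seen in a minimal sketch. This is plain uniform quantization, a generic textbook technique used here for illustration only; it is not TurboQuant, and the function names and sample values are made up for the example.

```python
def quantize_uniform(values, bits):
    """Map each float to an integer code using evenly spaced levels."""
    lo, hi = min(values), max(values)
    levels = (1 << bits) - 1                      # highest integer code
    scale = (hi - lo) / levels if hi > lo else 1.0
    codes = [round((v - lo) / scale) for v in values]
    return codes, lo, scale


def dequantize(codes, lo, scale):
    """Turn integer codes back into approximate floats."""
    return [lo + c * scale for c in codes]


def max_error(values, bits):
    """Largest gap between an original value and its restored version."""
    codes, lo, scale = quantize_uniform(values, bits)
    restored = dequantize(codes, lo, scale)
    return max(abs(a - b) for a, b in zip(values, restored))


sample = [-1.0, -0.37, 0.02, 0.5, 0.91, 1.0]
for bits in (8, 4, 2):
    print(bits, "bits -> max error", max_error(sample, bits))
```

Fewer bits per value means a smaller model or cache, but the restored numbers drift further from the originals. That drift is the quality cost the article refers to.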

PolarQuant is central to the quality of TurboQuant's compression. Representing vectors in polar coordinates reduces the amount of information needed to store them, which is what makes significant compression possible without compromising accuracy.
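The idea of storing a vector in polar form can be illustrated with a toy sketch. This is not Google's PolarQuant implementation: the pairing of coordinates, the choice to quantize only the angle, and all function names are assumptions made for the example. Each pair of coordinates becomes a radius and an angle, and the angle is stored as a small integer code.

```python
import math


def to_polar_pairs(vec):
    """Group coordinates in pairs and convert each pair to (radius, angle)."""
    if len(vec) % 2:
        raise ValueError("this sketch needs an even number of coordinates")
    return [(math.hypot(x, y), math.atan2(y, x))
            for x, y in zip(vec[0::2], vec[1::2])]


def quantize_angle(theta, bits):
    """Store an angle in [-pi, pi] as an integer code with 2**bits levels."""
    levels = 1 << bits
    step = 2 * math.pi / levels
    return round((theta + math.pi) / step) % levels  # wrap +pi onto -pi


def dequantize_angle(code, bits):
    """Recover the approximate angle from its integer code."""
    step = 2 * math.pi / (1 << bits)
    return code * step - math.pi


def roundtrip(vec, bits):
    """Compress and restore a vector; radii are kept exact in this sketch."""
    out = []
    for r, theta in to_polar_pairs(vec):
        t = dequantize_angle(quantize_angle(theta, bits), bits)
        out.extend([r * math.cos(t), r * math.sin(t)])
    return out


original = [3.0, 4.0, -1.0, 0.5]
restored = roundtrip(original, bits=8)
print(restored)
```

In this toy version the length of each pair is preserved exactly and only its direction is rounded, so the error stays bounded by the angle step. How the real system handles radii and higher dimensions is not described in the article and is left out here.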

💡 NaijaBuzz Take

Google's TurboQuant algorithm is a game-changer for the tech industry, particularly for Nigerian startups that rely on LLMs for their applications. Companies like Paystack and Flutterwave can benefit from this technology, as it enables them to deploy more efficient and cost-effective models. With TurboQuant, developers can create more sophisticated AI models without breaking the bank, opening up new possibilities for innovation in the African tech space.